Objective

You will create a Bash script that scrapes the URLs of tweets from your Twitter Bookmarks and saves them in a Markdown file.

Project Context

Web scraping, also called web harvesting or web data extraction, is the automated extraction of data from websites. People and businesses typically use it to draw on the vast amount of publicly available web data and make better-informed decisions.

In this project we will scrape Twitter, collecting the URLs of the tweets on the Bookmarks page, using simple command-line tools such as cURL and jq.

[Disclaimer: Use web scraping for learning purposes only. Any other use of the scraped data might result in legal action, and your IP address might get blocked.]

Project Stages

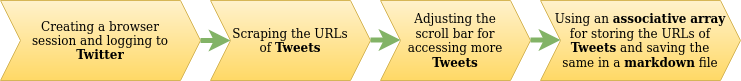

The project consists of the following stages:

High-Level Approach

- Configure the required web driver to access the browser capabilities in testing mode.

- Open up the Twitter login page in a browser session.

- Sign in to a Twitter account by passing your credentials through the appropriate cURL commands.

- Scrape the URLs of the tweets and redirect them to a Markdown file.

Creating a Browser Session

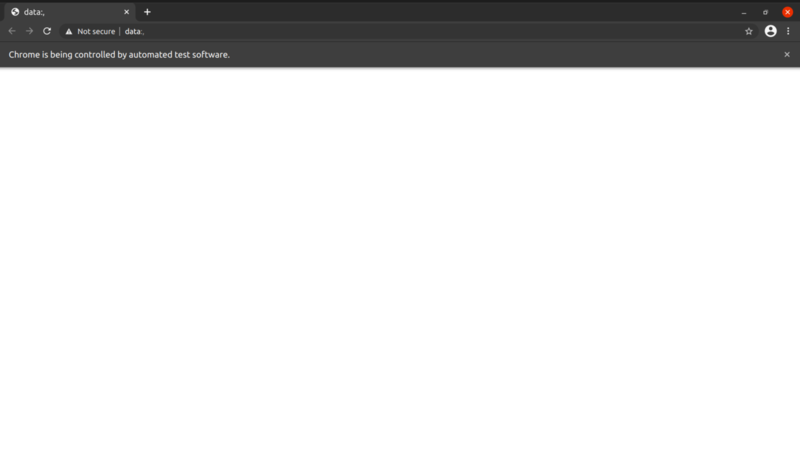

First we need to start a browser session so that we can send commands to it and work with the output it returns. To do that, download ChromeDriver and run the ChromeDriver server as a background process, so that we can keep issuing commands to the shell in the same terminal tab.

Requirements

- Install the Chrome browser; it is a reliable choice for this project.

- Download the ChromeDriver release that matches your Chrome browser version.

- Run the ChromeDriver server as a background process so that you can keep issuing commands in the same terminal tab.

- Create a browser session using a cURL command and save the session ID in a variable, as every later command needs it.

- Since the ChromeDriver server responds in JSON, you may need a jq command to parse the response.
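The requirements above can be sketched roughly as follows. This is a minimal sketch, not the project's reference solution: it assumes ChromeDriver listens on its default port 9515 and follows the W3C WebDriver protocol, where a new session is requested with a POST to `/session` and the ID comes back under `.value.sessionId`. The names `DRIVER_URL`, `session_payload` and `create_session` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical names: DRIVER_URL, session_payload, create_session.
DRIVER_URL="${DRIVER_URL:-http://localhost:9515}"   # ChromeDriver's default port

# Print the JSON body for a new-session request.
# Pass "headless" as the first argument to add Chrome's headless flag.
session_payload() {
  local args='[]'
  if [ "${1:-}" = "headless" ]; then
    args='["--headless"]'
  fi
  printf '{"capabilities":{"alwaysMatch":{"browserName":"chrome","goog:chromeOptions":{"args":%s}}}}' "$args"
}

# POST the payload to /session and print the session ID from the JSON reply.
create_session() {
  curl -s -X POST -H 'Content-Type: application/json' \
       -d "$(session_payload "${1:-}")" "$DRIVER_URL/session" |
    jq -r '.value.sessionId'
}

# Typical use (needs chromedriver, curl and jq on the PATH):
#   chromedriver --port=9515 &      # run the server in the background
#   sleep 2                         # give it a moment to start listening
#   SESSION_ID=$(create_session)    # or: SESSION_ID=$(create_session headless)
```

Keeping the payload in its own function makes it easy to inspect the JSON before any request is sent.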

Bring it On!

- Can you open the browser session in headless mode? This lets you scrape without the browser window appearing on screen.

Expected Outcome

You should see the Chrome browser pop up on your screen (unless headless mode was requested when creating the browser session), with the terminal waiting for the next command.

Signing in to Twitter Account

Before we go to the Bookmarks section of Twitter and start scraping, we first need to sign in to our Twitter account. To do so, we navigate to https://twitter.com/login, locate the input fields, enter the appropriate values, and then find and click the submit button.

Requirements

- First, navigate to https://twitter.com/login by sending a request to the browser session with the appropriate cURL command.

- Next, locate the input fields and send values to them using the appropriate cURL commands.

- Finally, locate the submit button and click it using the appropriate command.
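One possible shape for these commands is sketched below. It assumes the W3C WebDriver endpoints (`/url`, `/element`, `/element/{id}/value`, `/element/{id}/click`) and that `SESSION_ID` already holds the ID returned when the browser session was created. All function names are hypothetical, and the XPath expressions in the usage comment are placeholders: Twitter's actual markup has to be inspected in the browser and may change.

```shell
#!/usr/bin/env bash
DRIVER_URL="${DRIVER_URL:-http://localhost:9515}"
# Key under which W3C WebDriver returns an element ID.
ELEMENT_KEY='element-6066-11e4-a52e-4f735466cecf'

# Send a JSON body ($2) to a path ($1) under the current session; print the reply.
wd_post() {
  curl -s -X POST -H 'Content-Type: application/json' \
       -d "$2" "$DRIVER_URL/session/$SESSION_ID/$1"
}

# Navigate the browser to a URL.
go_to() {
  wd_post url "$(jq -n --arg url "$1" '{url: $url}')"
}

# Print the element ID of the first node matching an XPath expression.
find_by_xpath() {
  wd_post element "$(jq -n --arg xp "$1" '{using: "xpath", value: $xp}')" |
    jq -r --arg key "$ELEMENT_KEY" '.value[$key]'
}

# Type text ($2) into an element ($1).
type_into() {
  wd_post "element/$1/value" "$(jq -n --arg text "$2" '{text: $text}')"
}

# Click an element.
click_on() {
  wd_post "element/$1/click" '{}'
}

# Typical use (placeholder XPaths, adjust after inspecting the page):
#   go_to "https://twitter.com/login"
#   field=$(find_by_xpath '//input[@name="PLACEHOLDER"]')
#   type_into "$field" "$TWITTER_USER"
#   button=$(find_by_xpath '//PLACEHOLDER-submit-button')
#   click_on "$button"
```

Building the request bodies with `jq -n --arg` rather than string concatenation keeps quotes inside an XPath or a password from breaking the JSON.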

Tip

- Use XPath to locate the elements.

Expected Outcome

After completing the requirements, you will be signed in to your Twitter account and can move on to the next module, where the scraping happens.

Scraping URL of tweets

In this unit we will scrape the URLs of the tweets shown on https://twitter.com/i/bookmarks. To do this, we first navigate to that page and then locate the elements that hold the tweet URLs.

Requirements

- First, navigate to https://twitter.com/i/bookmarks using the same command you used in the previous milestone.

- Then find all the elements that hold the URLs of the tweets.
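A rough sketch of the element search follows. It assumes the W3C WebDriver `/elements` endpoint (which returns every match rather than the first) and the `/property/href` endpoint, which reports a link's resolved absolute URL. The XPath used here is an assumption about Twitter's markup, namely that each tweet sits in an `article` containing a link whose address includes `/status/`; verify it in the browser's inspector. All function names are hypothetical, and `SESSION_ID` is assumed to hold a live session ID.

```shell
#!/usr/bin/env bash
DRIVER_URL="${DRIVER_URL:-http://localhost:9515}"
ELEMENT_KEY='element-6066-11e4-a52e-4f735466cecf'   # W3C element-ID key

# Print the IDs of all elements matching an XPath expression, one per line.
find_all_by_xpath() {
  curl -s -X POST -H 'Content-Type: application/json' \
       -d "$(jq -n --arg xp "$1" '{using: "xpath", value: $xp}')" \
       "$DRIVER_URL/session/$SESSION_ID/elements" |
    jq -r --arg key "$ELEMENT_KEY" '.value[] | .[$key]'
}

# Print the href property (the resolved, absolute URL) of one element.
get_href() {
  curl -s "$DRIVER_URL/session/$SESSION_ID/element/$1/property/href" |
    jq -r '.value'
}

# Print the URL of every tweet link currently rendered on the page.
# The XPath is an assumed selector, not a documented one.
visible_tweet_urls() {
  find_all_by_xpath '//article//a[contains(@href, "/status/")]' |
    while IFS= read -r element_id; do
      get_href "$element_id"
    done
}
```

Only the tweets currently rendered are returned, so the same URL can show up again on later calls as the page scrolls.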

Bring it On!

- Try to locate and scrape the whole tweet, i.e. the name of the account that posted it, its content, the date it was posted, and its number of likes, comments and retweets.

Expected Outcome

After fulfilling all the requirements, you will be able to grab the URLs of the tweets currently visible on the screen.

Moving the scroll bar slowly for grabbing all the tweets

Requirements

- Execute a JS command in the browser to move the scroll bar downwards.

- Compare the previous position of the scroll bar with its current position to determine whether the page has actually scrolled. Keep in mind that on a slow connection the next batch of tweets may not have loaded yet, so the scroll bar may fail to move even though tweets remain. Wait and retry a few times before concluding that you have reached the end.
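The scroll-and-compare loop could look something like the sketch below. It assumes the W3C WebDriver `/execute/sync` endpoint for running JavaScript and treats a few consecutive unchanged positions as the end of the page. The step size of 800 pixels, the 2-second delay, the limit of 3 stalls, and all names (`scroll_step`, `scroll_to_end`, `SCROLL_DELAY`, `MAX_STALLS`) are illustrative assumptions.

```shell
#!/usr/bin/env bash
DRIVER_URL="${DRIVER_URL:-http://localhost:9515}"
SCROLL_DELAY="${SCROLL_DELAY:-2}"   # seconds to wait for new tweets to load
MAX_STALLS="${MAX_STALLS:-3}"       # unchanged positions tolerated before stopping

# Scroll down by one step and print the new vertical scroll position.
scroll_step() {
  curl -s -X POST -H 'Content-Type: application/json' \
       -d '{"script":"window.scrollBy(0, 800); return Math.round(window.pageYOffset);","args":[]}' \
       "$DRIVER_URL/session/$SESSION_ID/execute/sync" |
    jq -r '.value'
}

# Scroll until the position stays the same MAX_STALLS times in a row.
# Any arguments are run as a command after each successful move,
# for example a function that scrapes the tweets now on screen.
scroll_to_end() {
  local previous=-1 current stalls=0
  while [ "$stalls" -lt "$MAX_STALLS" ]; do
    current=$(scroll_step)
    if [ "$current" = "$previous" ]; then
      stalls=$((stalls + 1))        # end of page, or just a slow connection
    else
      stalls=0
      previous=$current
      if [ "$#" -gt 0 ]; then "$@"; fi
    fi
    sleep "$SCROLL_DELAY"
  done
}
```

Because the callback only runs after a move, the tweets visible before the first scroll need to be scraped once before calling `scroll_to_end`.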

Expected Outcome

After fulfilling all the requirements, you will be able to grab the URLs of all the bookmarked tweets, ready to be saved in a Markdown file.

Using an associative array to store the URLs and saving them in a Markdown file

As you scroll down, the same element is returned two or three times, depending on how far each scroll moves. An associative array, which can serve as a set data structure in Bash, lets us keep only the unique URLs. After that we will save them in a Markdown file.

Requirements

- Use an associative array to store unique URLs.

- Save the URLs in a file using redirection.
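As an illustration of the set idea, the sketch below stores each URL as a key of an associative array, so adding the same URL again simply overwrites the existing entry. It requires Bash 4 or later. The names `seen_urls`, `remember_urls` and `save_markdown` are hypothetical, and writing each URL as a Markdown bullet is one assumed output layout among many.

```shell
#!/usr/bin/env bash
# Requires Bash 4 or later for associative arrays.
declare -A seen_urls=()

# Record each URL read from standard input as a key; duplicates collapse.
remember_urls() {
  local url
  while IFS= read -r url; do
    if [ -n "$url" ]; then
      seen_urls["$url"]=1
    fi
  done
}

# Write the unique URLs to the file named in $1 as a Markdown bullet list.
# Key order in an associative array is unspecified.
save_markdown() {
  local url
  for url in "${!seen_urls[@]}"; do
    printf -- '- %s\n' "$url"
  done > "$1"
}
```

Feed `remember_urls` through redirection or process substitution, for example `remember_urls < <(some_command)`, rather than a pipe. In a pipeline the function runs in a subshell and the array updates are lost when it exits.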

Bring it On!

- Can you think of another way to produce a Markdown file with unique URLs without using associative arrays? (HINT: Look into the uniq command.)
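One way the hint might play out is sketched here, assuming every scraped URL, duplicates included, has first been appended to a scratch file. Since `uniq` only removes adjacent duplicate lines, the input is sorted first. The function name `dedupe_to_markdown` and the bullet-list layout are assumptions.

```shell
#!/usr/bin/env bash
# Read raw URLs from the file $1 and write the unique ones to $2
# as a Markdown bullet list. sort groups duplicates so uniq can drop them.
dedupe_to_markdown() {
  sort "$1" | uniq | sed 's/^/- /' > "$2"
}
```

Unlike the associative-array approach, this produces the URLs in sorted order rather than the order in which they were scraped.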

Expected Outcome

After fulfilling all the requirements, you will be able to save the URLs of all the tweets in a Markdown file.