Most programmers often feel the need to sort the data provided to them. Sorted data is easier to understand and process.

As resources become increasingly limited and time more valuable, the most efficient sorting mechanism must be implemented based on the characteristics of the data provided.

Various types of sorting mechanisms include:

- Quick Sort

- Bubble Sort

- Merge Sort

- Insertion Sort

- Selection Sort

- Heap Sort.

- Radix Sort

- Bucket Sort

This blog covers the fundamentals to get you started with insertion sort. Before moving forward, make sure you have gone through the earlier blog on 10 best sorting algorithms to easily grasp the following sections.

Why insertion sort?

Before diving into the technical details of insertion sort, consider this example:

You and your pet dog (let’s call him Fluffy) are participating in a competition.

To win, Fluffy must run to a basket, pick a bone and give it to you, while you need to arrange them in increasing order of length, all of it as quickly as possible.

Suppose Fluffy takes 60 seconds to bring a bone, and you need 10 seconds to sort it into its proper position. One approach would be to wait for Fluffy to stack all the bones near you and then sort them.

For 10 bones, this approach takes (60+10)*10 = 700 seconds.

Another possible approach would be to sort each bone as it comes into its proper position. This approach takes 60*10 = 600 seconds. That is 1 min 40 sec lesser! Yay!

Insertion sort does the same. It places a new bone in its proper position in an already sorted array (or subarray, as we will see further).

Now replace the dog, bone, and yourself with a hard drive, data, and a CPU. While transferring data from a slow hard drive to a CPU, data transfer may take more time than data processing at the CPU. Hence, to avoid wastage of CPU cycles, it is good to keep sorting data alongside and add new data to the sorted data.

Now let us study the process of insertion sort in detail.

What is insertion sort?

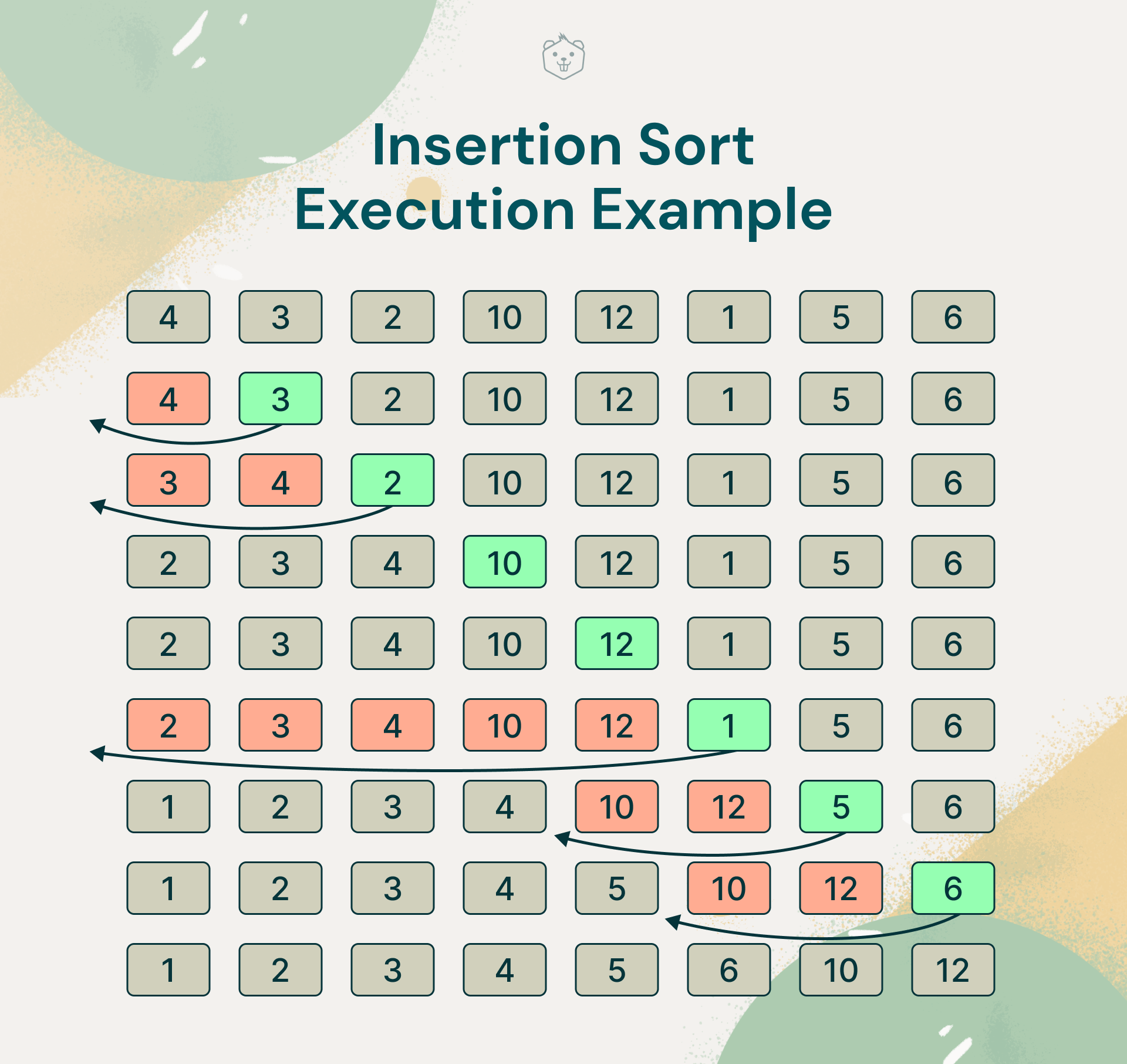

Insertion sort is a sorting method that works by shifting each element in the array one at a time. It iterates through the input elements, expanding the sorted array each time by comparing the current element to the sorted array's greatest value.

If the current element is greater, it remains in place and continues to the next element; otherwise, it locates the element's appropriate location in the sorted array and transfers it there.

Finally, it moves any elements in the sorted array that are greater than the current element one place forward.

Try it yourself

Insertion sort strongly resembles how we sort playing cards. To understand how, simply pick every subsequent card from a deck and place it in its proper position by comparing its value.

Another real-world example of insertion sort is how tailors arrange shirts in a closet. They always keep them in sorted order of size so that they can insert new shirts into the proper spot very quickly by shifting existing shirts forward to make room for the new shirt.

Insertion sort algorithm

Step 1: The first element is assumed to be already sorted

Step 2: Select the next element.

Step 3: Compare all elements in the sorted sublist with the element to be sorted.

Step 4: Shift all elements in the sorted sub-list that are greater than the value to be sorted.

Step 5: Fill in the value.

Step 6: Continue until the list is sorted.

Insertion sort pseudocode

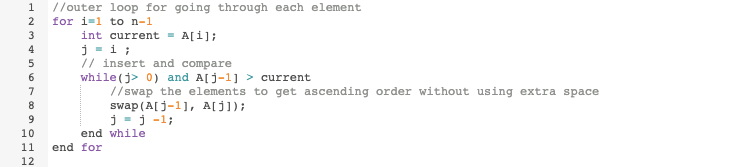

Assume that the first element is already sorted, and begin the iteration from index = 1 (in a zero-based array).

This algorithm can also be implemented recursively, such that the function calls itself, as follows:

Now that you know the algorithm and the pseudocode, try implementing insertion sort on your own. You may take help from the code snippets provided. Feel free to reach out to us in the comments below in case you get stuck.

Insertion sort in C

Insertion sort in C++

Insertion sort in Java

Insertion sort in Python3

Time complexity analysis of insertion sort

The best case input to an insertion sort function is an array sorted in the required order (here, ascending). In such cases, the algorithm simply compares the values of the ith element and the sorted subarray. Thus we get a linear time complexity, i.e., O(n).

The worst-case input is an array sorted in the opposite order (here, descending). In such cases, the algorithm compares the ith elements to every value in the sorted subarray and shifts every element of the subarray one position forward, thus resulting in quadratic time complexity, i.e., O(n2).

The average case complexity of insertion sort is also quadratic. Hence insertion sort is not preferred for large data sets. However, insertion sort is the fastest algorithm for sorting small arrays. Note that while there is no concrete threshold value to define small and large arrays, it may lie anywhere between 10 to 50 elements.

Space complexity analysis of insertion sort

Space complexity is the same for all cases, i.e., O(1). Hence, no auxiliary memory is required.

Comparison of insertion sort with other sorting algorithms

Insertion sort is similar to selection sort; the primary difference is that the ith iteration in insertion sort gives the sorted subarray from the i elements input to it, whereas, in selection sort, the ith iteration gives the i smallest elements in the entire array.

Although bubble sort has the same time complexity, i.e., O(n2), it is far less efficient than both insertion and selection sort. While some divide-and-conquer algorithms, such as Quick Sort and Merge Sort, outperform insertion sort for larger arrays, non-recursive sorting methods such as insertion sort or selection sort are often quicker for small arrays.

Enhancements on insertion sort

Method 1:

Algorithm:

- Just compare the ith element with (i-1)th element.

- If ith > (i-1)th, then simply append the element to the list. Else

- Compare ith element with ptr (i.e., the first element that is smallest in the current sorted list). We know that A[0] to A[i-1] is already sorted, and ptr points to A[0].

- If ith < ptr, then insert the ith element before ptr and point ptr to this element. Swap the remaining list accordingly. Else

- If the element lies between the 1st and the (i-1)th element, then we further divide the total number of sorted elements by 2 i.e. k = ceil(i/2).

- Now compare the ith element with the kth element and check again.

- If ith < kth, then continue comparing with smaller elements until we find an element kth < ith. Compare ith element with (k+1)th and swap.

- If ith > kth then continue comparing with greater elements until we find an element kth > ith. Compare ith element with (k-1)th and swap.

Using this method, the average and worst-case complexity can be reduced to O(n^1.585) from O(n^2) as the number of comparisons reduces significantly.

Method 2:

Algorithm:

- Start with the first and last elements of the array i.e. A[0] and A[n-1] where n is the length of the array.

- Compare A[0] and A[n-1]. If A[0]>A[n-1], swap their values. Else continue.

- Compare A[1] and A[n-2] and swap if A[1] > A[n-2].

- Repeat this process until the two middle elements are processed or only one middle element remains.

- Execute standard insertion sort on this array.

This algorithm makes insertion sort more practical for larger list sizes by significantly reducing the number of comparisons.

Congratulations! You have mastered insertion sort for your next big interview! If you haven’t already, try implementing insertion sort yourself. Trust us! It is worth the effort :)

Sorting algorithms may seem dreary or complex at first glance. If this is the case with you, don’t worry, we’ve got your back! Check out our simple yet comprehensive summary of the Top 10 Sorting Algorithms.

Happy Coding!