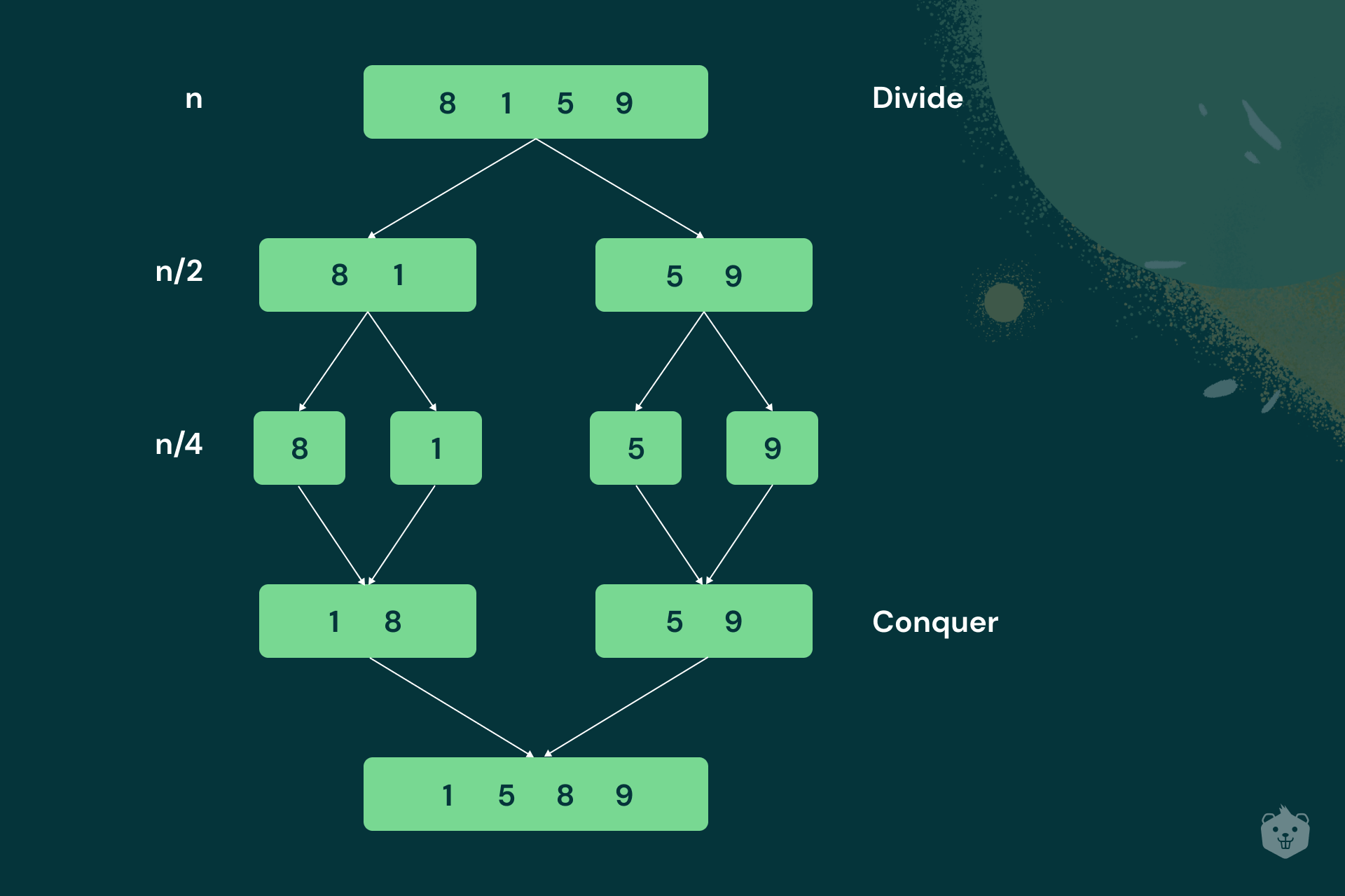

Merge Sort is the perfect example of the Divide and Conquer algorithm design.

Given an array, it divides the array into two halves, sorts these two halves recursively, and then, it merges the two smaller sorted arrays into a larger sorted array.

Amongst popular sorting algorithms, Merge Sort stands out for a reason. Do you know why?

Its time complexity is impressive!

In this blog, you will gain a thorough understanding of Merge Sort and try out fun activities to get hands-on with this amazing problem-solving algorithm.

Without further ado, let’s dive in and learn all about Merge Sort!

Merge Sort’s Divide and Conquer Algorithm Design

Divide and conquer is a popular algorithm design technique where a huge or cumbersome problem is broken down into simpler, solvable subproblems. The solutions of the subproblems are combined to solve the original problem.

Let us see how this logic is applied in Merge Sort!

You would have come across Merge Sort examples in the following way:

Although this is the big picture, it does not clearly define how the recursive calls occur.

Take a look at the pseudocode:

Mergesort (A[0..n-1])

//Input: An array A[0..n-1]

//Output: Array A[0..n-1] sorted in increasing order

//If the array contains just one element, or if it is empty, it is already sorted

If n < 2

return

else

//copy first half of the array A to an array B

copy A[0..floor(n/2) - 1] to B[0..floor(n/2) - 1]

//copy second half of the array A to an array C

copy A[floor(n/2)..n - 1] to C[0..floor(n/2) - 1]

//recursively divide array B until base case is reached

Mergesort(B[0..floor(n/2) - 1)

//recursively divide array C until base case is reached

Mergesort(C[0..floor(n/2) - 1)

//Merge sorted arrays B and C back into A

Merge (B,C,A)

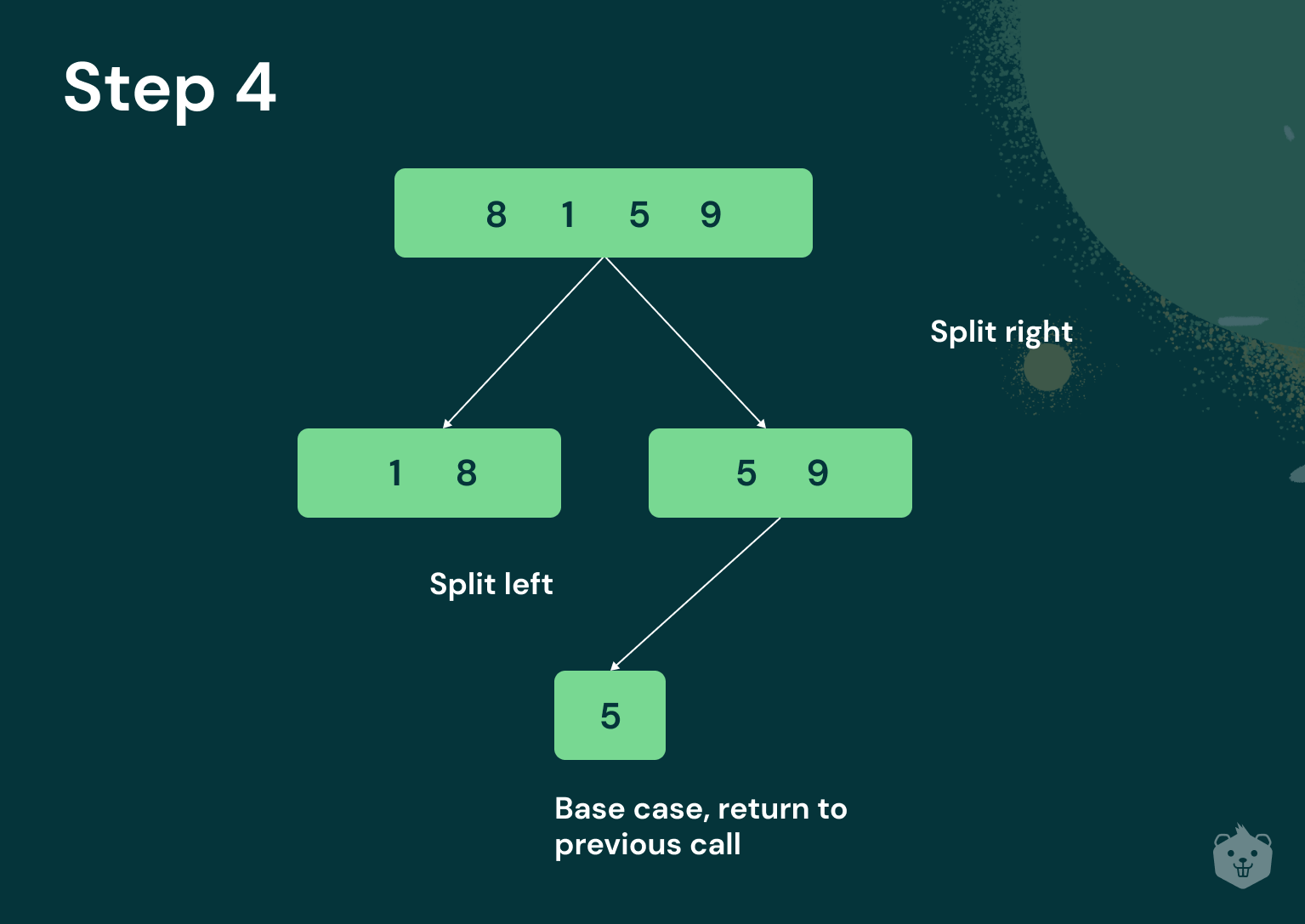

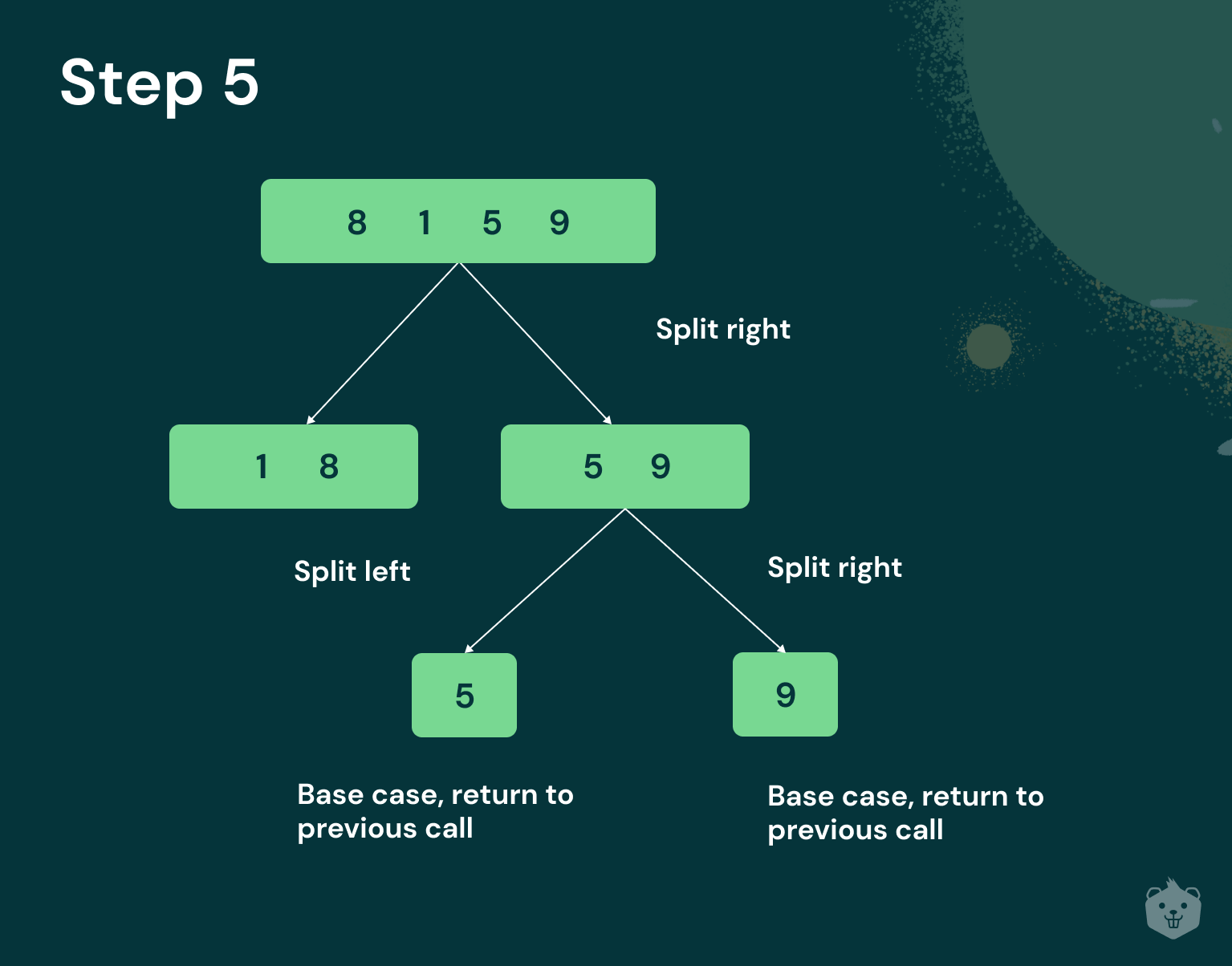

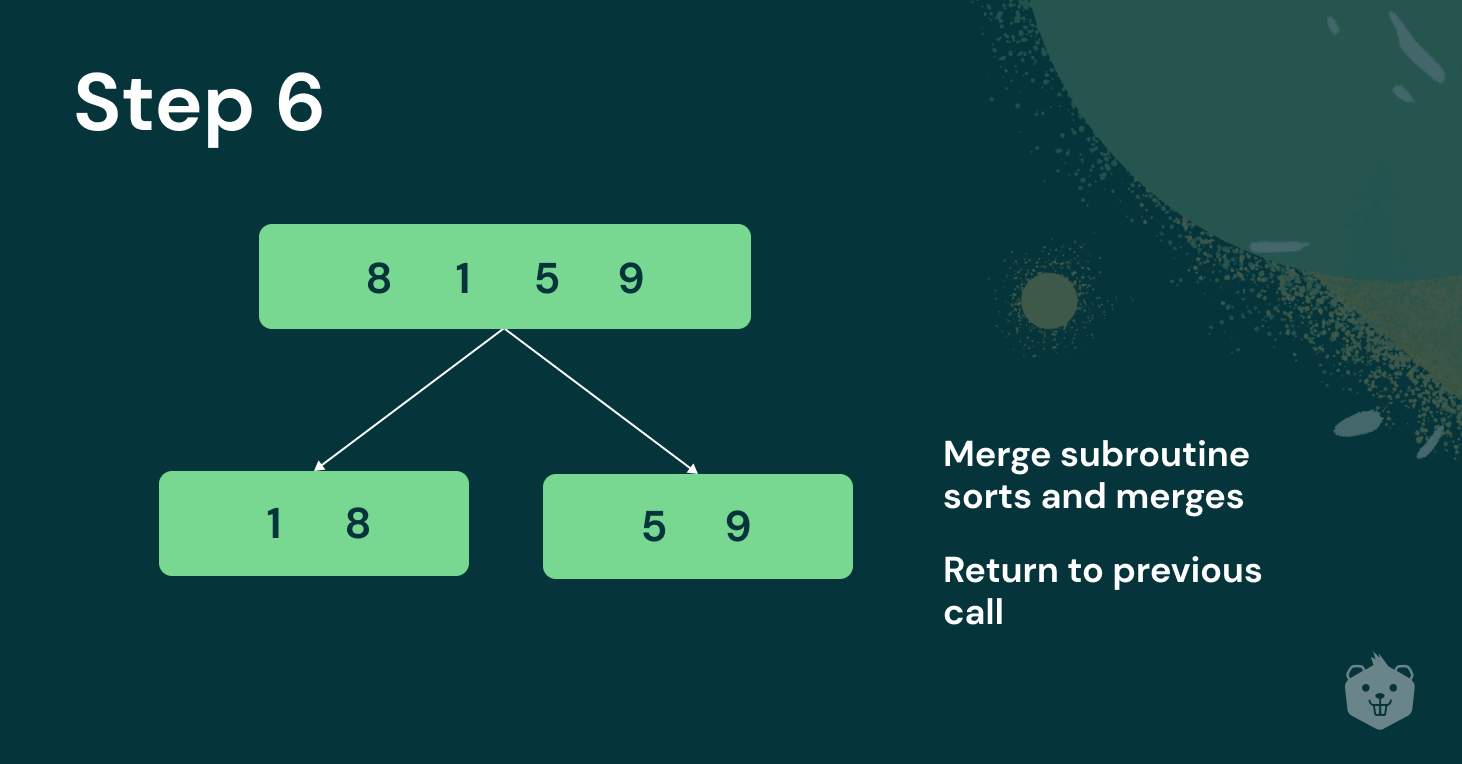

In Merge Sort, you must keep splitting the array until you reach the base case. That is, until you have only one item. One item is considered to be a sorted array.

An empty array also falls under the base case. It is sorted.

Recursion

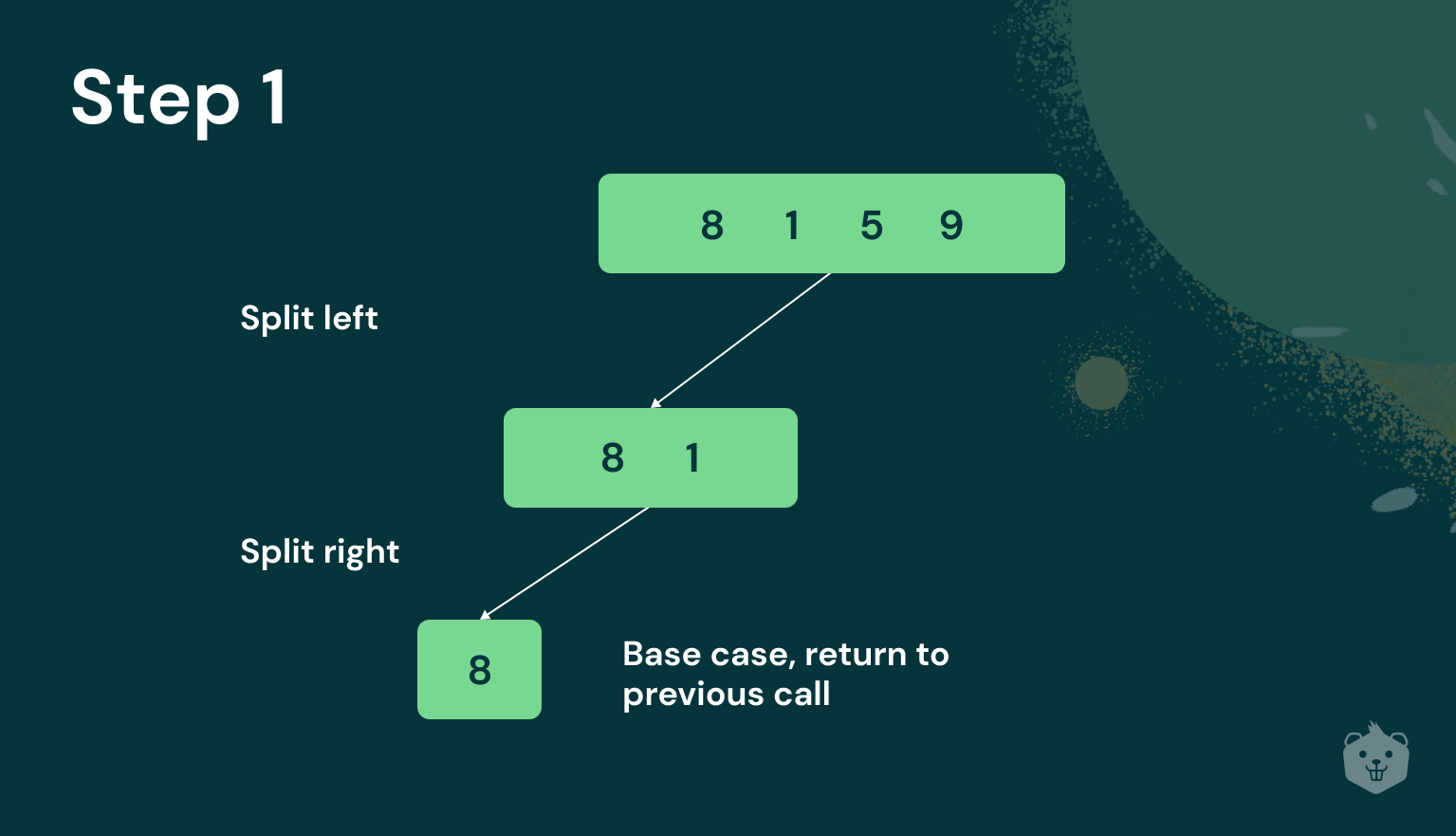

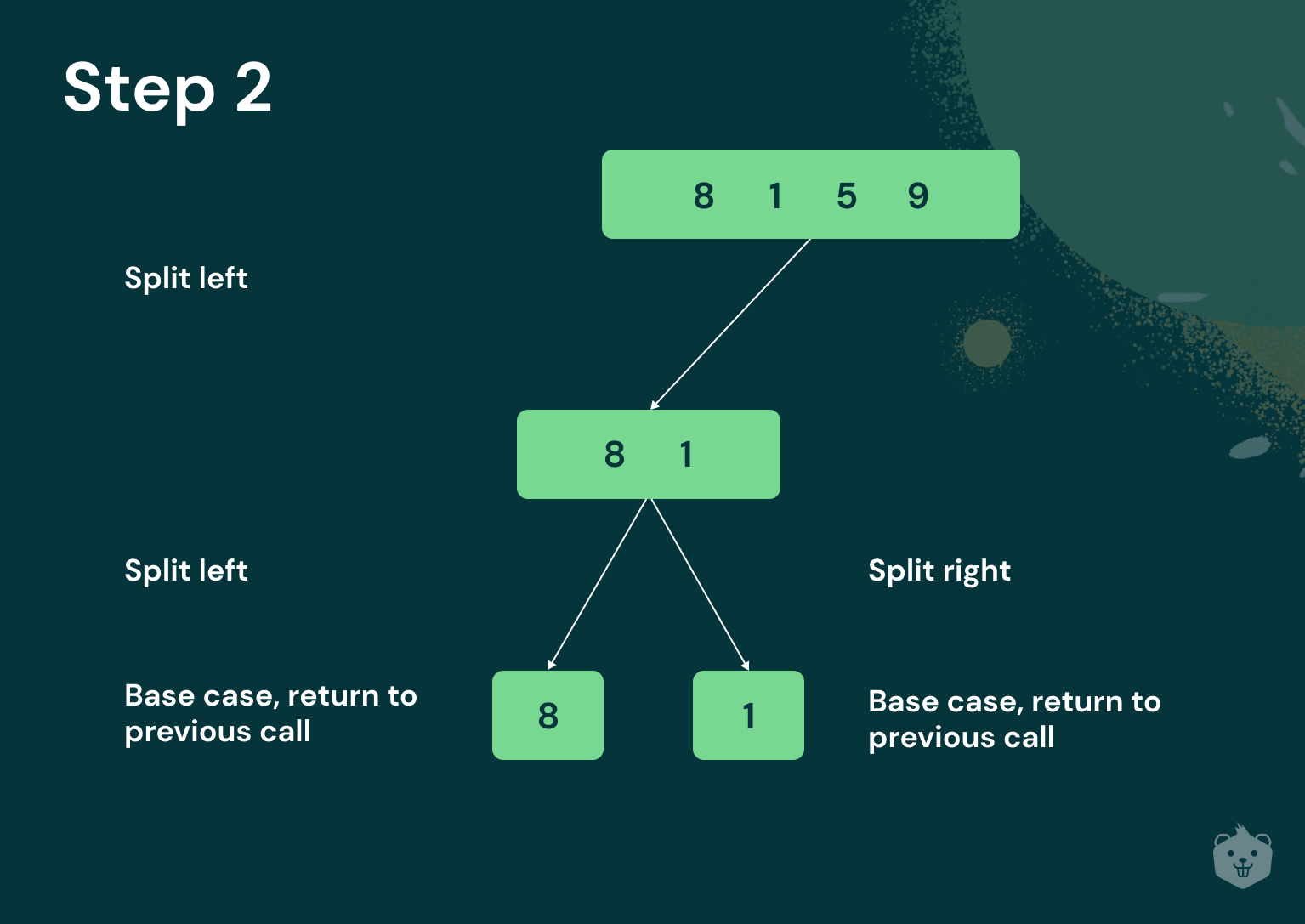

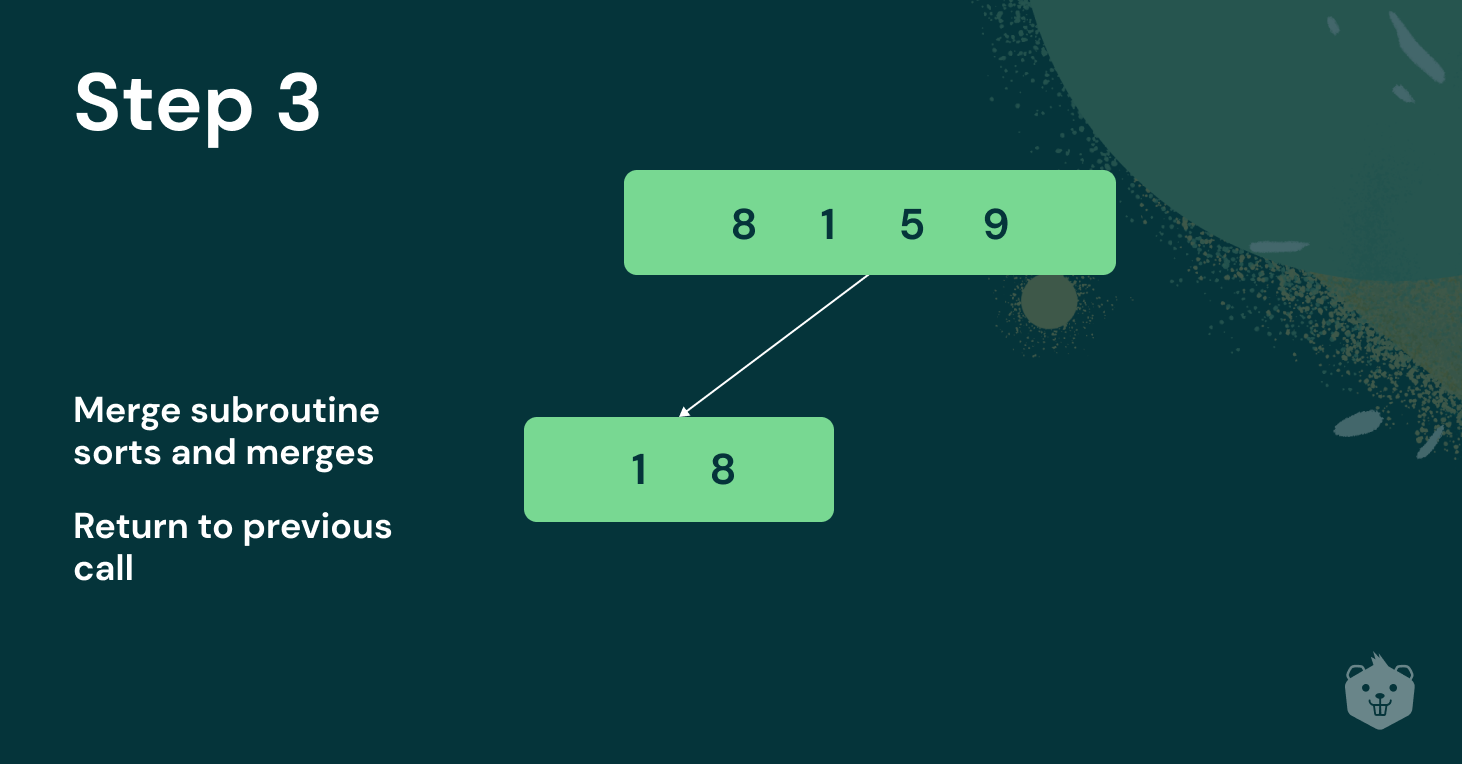

Merge Sort has a recursive function that splits the array recursively until the base case is reached.

Merge subroutine

It also has a merge subroutine that compares each element in two arrays, at every level of division, sorts, and then merges them.

Notice the operation ‘floor’ in the pseudocode. This is to make sure that Merge Sort works for an odd number of input sizes as well. (Ex: floor(5.4) = 5)

Tracing the flow of Merge Sort

Implementation of Merge Sort in Python

def merge_sort(array, l_index, r_index):

#recursive function

if l_index < r_index:

mid = (l_index + r_index)//2

merge_sort(array, l_index, mid)

merge_sort(array, mid + 1, r_index)

merge(array, l_index, r_index, mid)

#merge subroutine

def merge(array, l_index, r_index, mid):

#Merge Sort needs auxiliary space for merging. L_half and r_half are two arrays that #are used to store elements after each comparison made during sorting

l_half = array[l_index:mid + 1]

r_half = array[mid+1:r_index+1]

l_half_index = 0

r_half_index = 0

sorted_index = l_index

#compare sorted left and right subarrays and merge them

while l_half_index < len(l_half) and r_half_index < len(r_half):

if l_half[l_half_index] <= r_half[r_half_index]:

array[sorted_index] = l_half[l_half_index]

l_half_index = l_half_index + 1

else:

array[sorted_index] = r_half[r_half_index]

r_half_index = r_half_index + 1

sorted_index = sorted_index + 1

#there could be elements still left after each comparison has been made

for i in range(l_half_index,len(l_half)):

array[sorted_index] = l_half[i]

sorted_index = sorted_index + 1

for i in range(r_half_index,len(r_half)):

array[sorted_index] = r_half[i]

sorted_index = sorted_index + 1

merge_sort(array, 0, len(array) -1)

print(array)

Input

array = [33,1,60,14,16,1,800]

Output

[1, 1, 14, 16, 33, 60, 800]

Test your understanding

Q1 Given the following list of numbers, [80, 1, 40, 22, 10, 11, 70, 13, 2, 13, 10, 65, 13, 15, 16, 7] which answer illustrates the list to be sorted, after three recursive calls to merge_sort?

- [2, 13, 10, 65, 13, 15, 16, 7]

- [80,1]

- [80, 1, 40, 22]

- [80]

Q2 Given the following list of numbers, [80, 1, 40, 22, 10, 11, 70, 13, 2, 13, 10, 65, 13, 15, 16, 7] which answer illustrates the first two lists to be merged?

- [80, 1] and [40, 22]

- [1, 10, 11, 13, 22, 40, 80] and [2, 7, 10, 13, 13, 15, 16, 65]

- [80] and [1]

- [2] and [7]

Q3 What would be the first left split of the array [8, 1, 5, 10, 11, 2, 6] according to the pseudocode we saw earlier?

- [8, 1, 5]

- [8, 1, 5, 10]

Time Complexity Analysis of Merge Sort

How does Merge Sort have Θ(n*logn) time complexity?

Firstly, Merge Sort makes n - 1 comparisons during sorting. For example, consider two arrays

[1, 3] and [2, 4]

Compare 1 with 2. 1 < 2, hence 1 is added into the array.

Compare 3 with 2. 2 < 3, hence 2 is added into the array.

Compare 3 with 4. 3 < 4, hence 3 is added into the array.

4 is the only element left, it is added into the array.

Now, you have n - 1 comparisons.

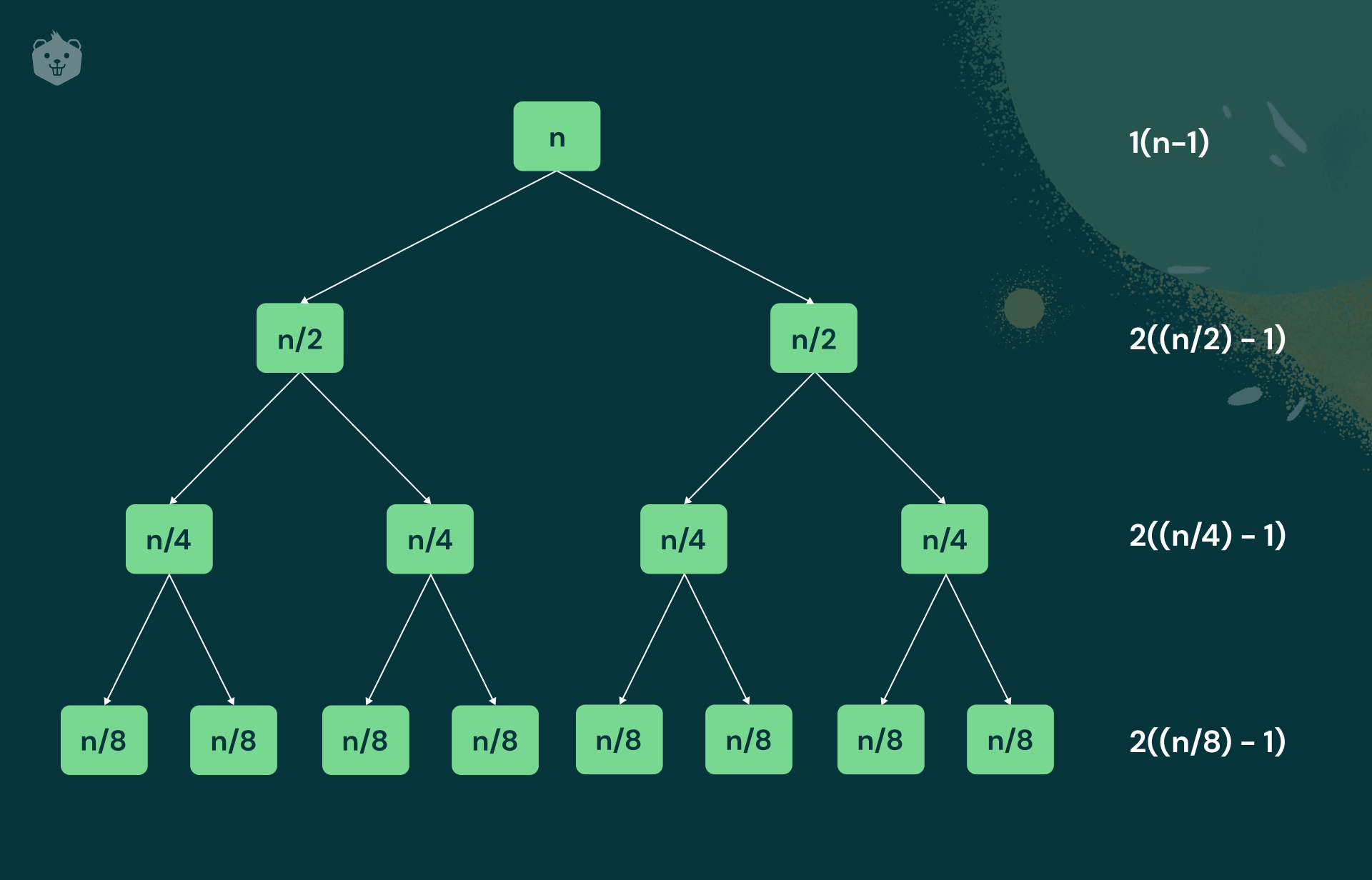

Consider an input of size n = 8

The figure above demonstrates that for an input size of 8, three levels of division are needed. Thus the number of levels of division is log2(8) = 3

Writing log as a function of n, we get log2(n).

For n = 2k if you keep dividing further, at each level, you can generalize the above calculations as ∑ 2k[n/2k-1] with lower bound as k = 0 and upper bound as log(n) - 1

It results in Θ(n*logn)

Worst case: Assume for the sake of simplicity, that n is a power of 2. Let C(n) be the number of key comparisons made. The equation below defines the amount of work that needs to be done to divide and merge the arrays.

C(n) = 2C(n/2) + Cmerge (n)

C(n/2) is the number of comparisons at each left and right split.

C(1) = 0 (For just one element no comparisons are made)

Cmerge (n) represents the number of comparisons made while merging.

At each step, exactly one comparison is made, after which, the total number of elements in the two arrays still needed to be compared is reduced by one.

In the worst case, neither of the two arrays becomes empty, before the other one contains just one element.

Hence, for the worst case, Cmerge (n) is n - 1

An example of an array that would be the worst case scenario for Merge Sort is [1, 9, 5, 13, 3, 11, 7, 15]..

Cworst(n) = 2Cworst(n/2) + n - 1

Using master’s theorem, you can conclude that

Cworst(n) = nlog2n + n - 1

Best case: In the best case scenario, the array is already sorted, but we still have to split and recombine it back.

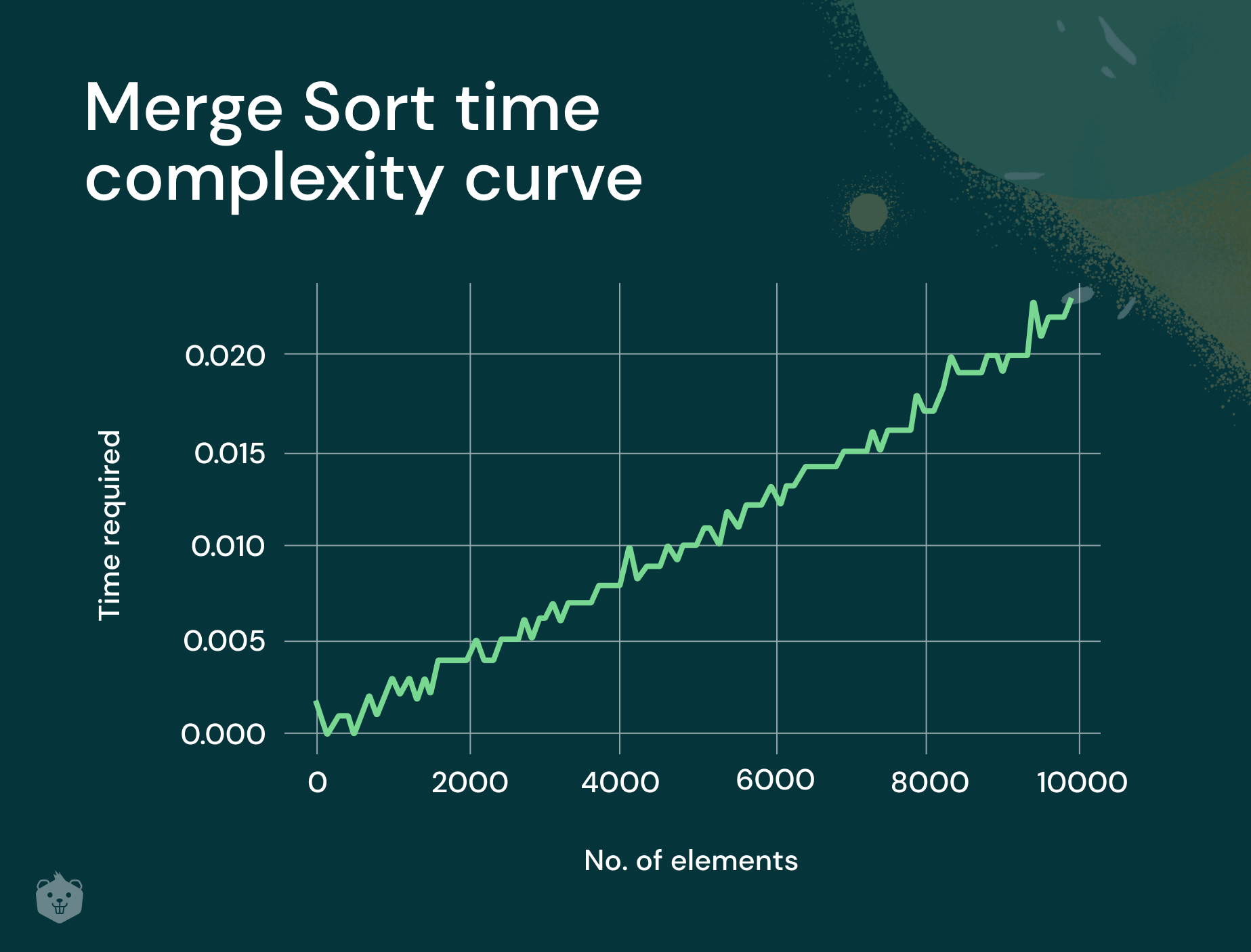

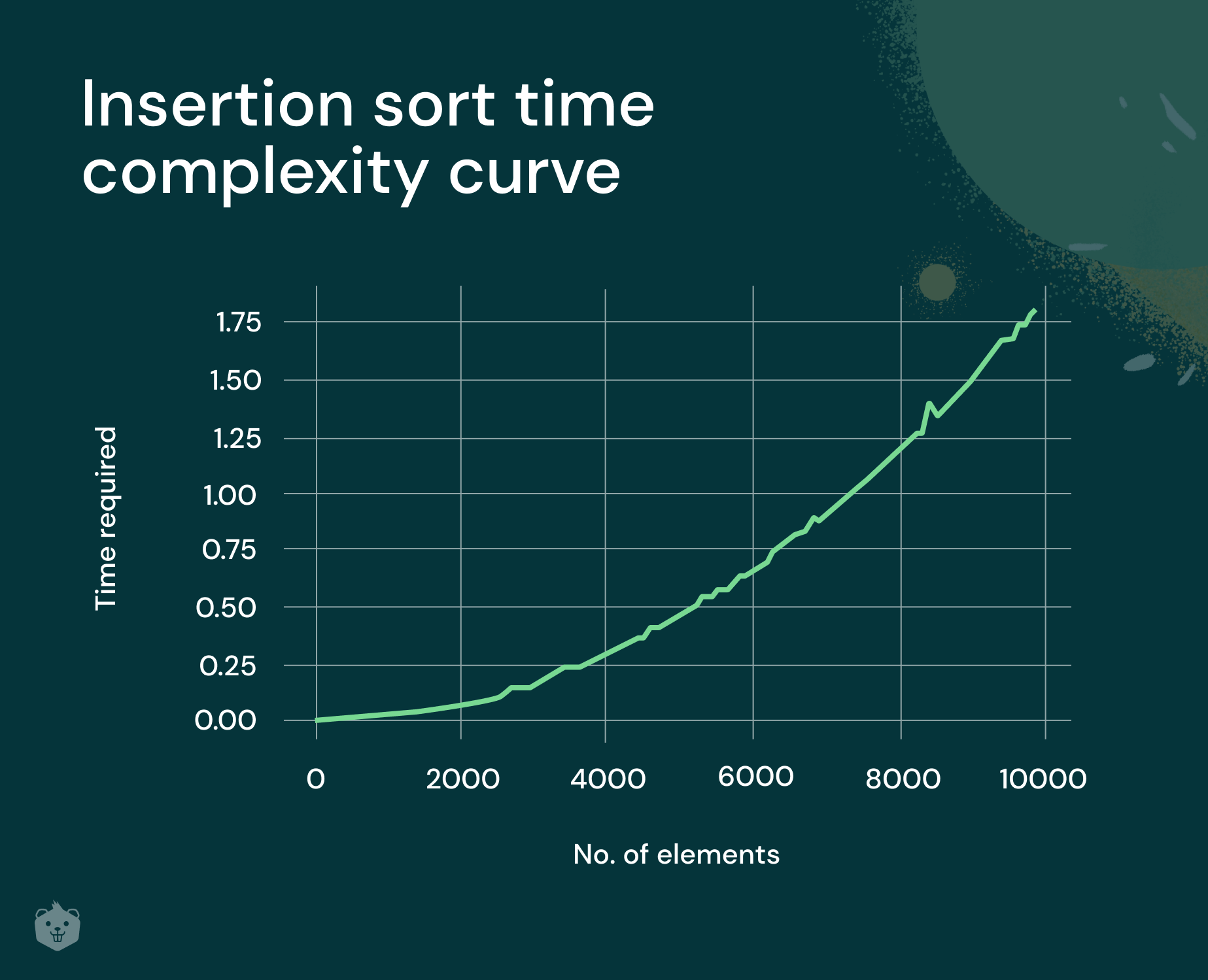

Time complexity curve plot using Python

The merge_sort function that we wrote previously, is used to plot the curve for random input.

Python’s random module is used to generate random numbers as input.

Time module is used to measure how much time was taken for merge_sort to finish execution. Here we plot a graph of Input size against Time taken.

import time

from random import randint

arr1 = [randint(0,1000) for i in range(10000)]

times=[]

for x in range(0,10000,100):

start_time = time.time()

arr2 = (arr1[:x])

merge_sort(list2,0, len(arr2)-1)

elapsed_time = time.time() - start_time

times.append(elapsed_time)

elapsed_time

x=[i for i in range(0,10000,100)]

import matplotlib.pyplot as plt

%matplotlib inline

plt.xlabel("No. of elements")

plt.ylabel("Time required")

plt.plot(x,times)

Selection Sort’s time complexity clearly appears to be quadratic. For larger values of input size, Merge Sort’s time complexity curve can be enhanced.

Take a deeper look at Merge Sort

Analysis of sorting algorithms needs three things to be considered. They are time complexity, space complexity, and stability.

Time complexity

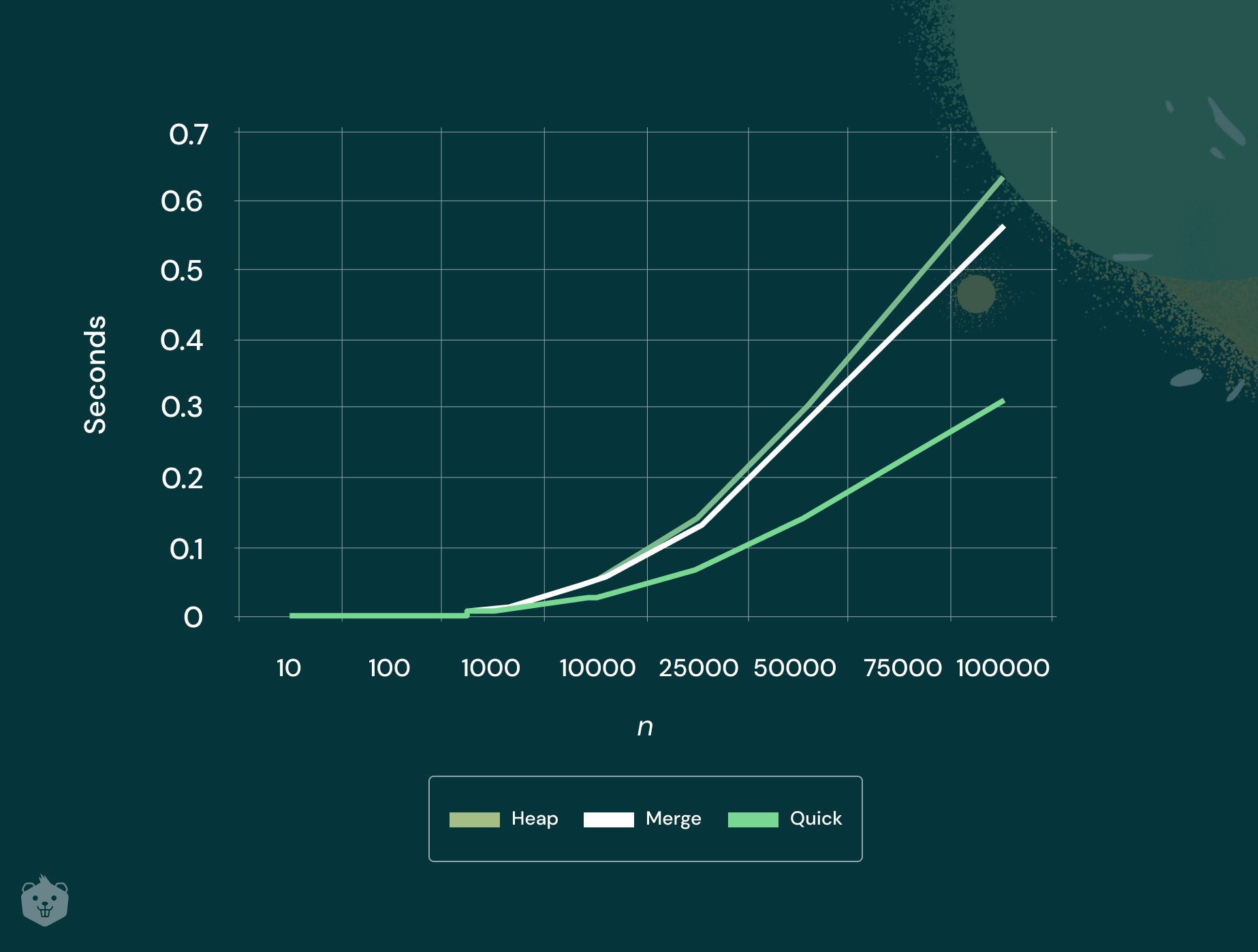

Merge Sort has an efficient time complexity of Θ(n*logn). But, quicksort is still faster, as long as a random pivot is chosen.

Space complexity

Merge Sort, like other sorting algorithms, does not work in-place.

An in-place algorithm is a sorting algorithm in which the sorted items occupy the same storage as the original ones.

But Merge Sort makes copies of the left and right half of the given array. It requires a linear amount of extra storage space.

Stability

Merge Sort is a stable algorithm. An algorithm is said to be stable when it keeps elements with equal values in the same relative order in the output as they were originally, in the input.

The efficiency of Merge Sort, apart from it having a great time complexity, is that you don't need to read all the data in memory at once. Because of the divide-and-conquer nature of the algorithm, it can be easily parallelized.

Applications of Merge Sort

Sorting linked lists

You have seen merge sort being implemented using arrays. However, in practice merge sort works better with linked lists. This is because you don’t have to store elements linearly in linked lists.

Items to a linked list can be inserted in the middle in Θ(1) extra space and Θ(1) time. Hence, merge sort is more efficient when linked lists are used.

Counting inversions in an array

Inversion counting is about checking how far, or close an array is from being sorted.

E- commerce websites have a section - "You might like.”

To display the options under this, they maintain an array for all the user accounts.

Merge sort helps to check the least number of inversions count with your array of choices. Based on this, they will recommend to you about what other users bought or liked.

External Sorting

In practice, enormous amounts of data is processed. Sorting such huge amounts of data needs additional, external memory. Usually an external hard drive is used, when data does not fit in memory. When implementing External Sorting, merge sort plays a crucial role.

Test your understanding

Q4 Merge Sort requires an auxiliary space of

- Θ(1)

- Θ(n)

Q5 The input size of the array to be sorted with Merge Sort is 9. How many levels of division does Merge Sort do?

Q6 Say you have two sorted lists of size m and n. The number of comparisons in the worst case by the Merge Sort algorithm will be

- m x n

- m + n

- m + n - 1

Try it yourself

- Apply Merge Sort to sort the list L, E, A, R, N, B, Y, D, O, I, N, G in alphabetical order

- Implement Merge Sort using linked lists

- Use python’s pygorithm module to learn more about all sorting algorithms.